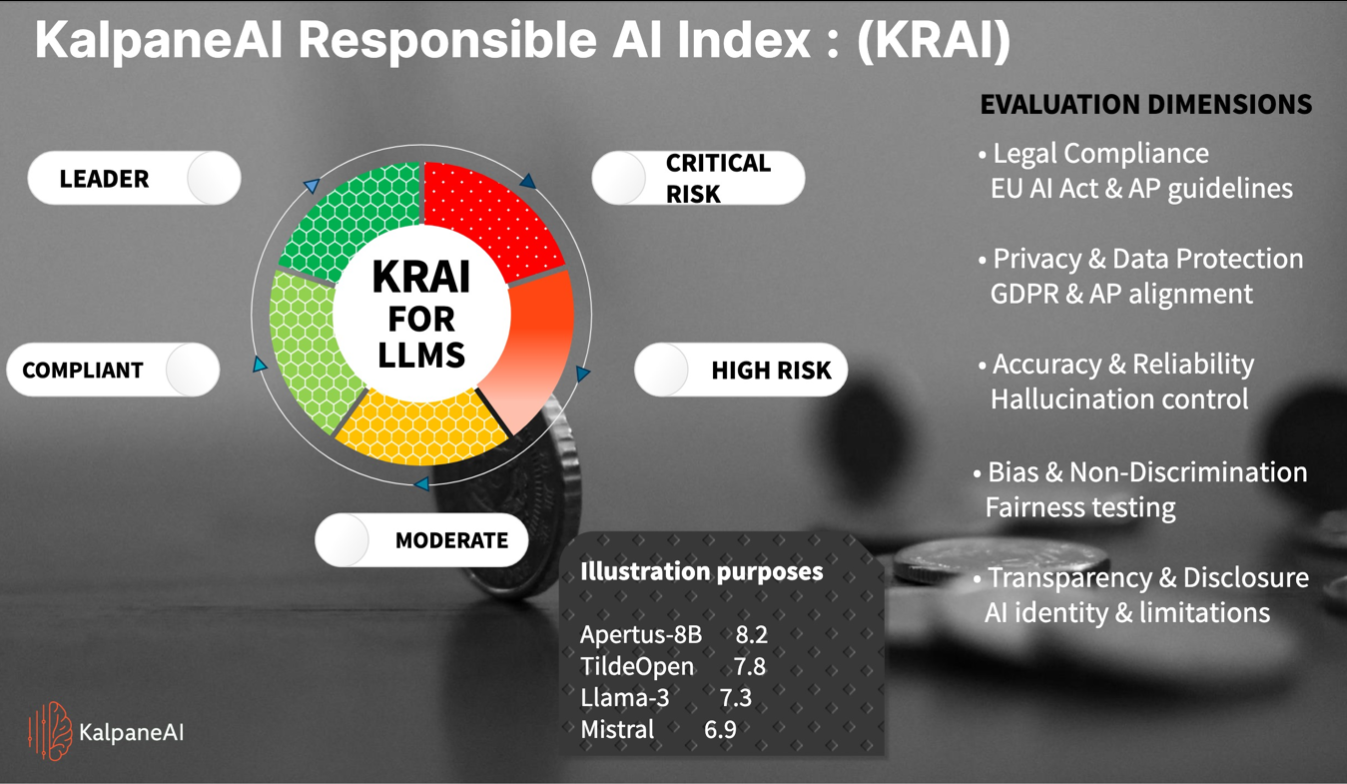

KRAI — KalpaneAI Responsible AI Index

A structured evaluation framework for comparing LLMs through a governance lens, not just a capability lens.

Moderate Tier

Models suitable for bounded use cases but requiring greater caution and stronger safeguards.

Evaluation dimensions

- Legal compliance under the EU AI Act and AP guidance

- Privacy and data-protection alignment with the GDPR

- Accuracy, robustness, and hallucination control

- Bias, non-discrimination, and fairness testing

- Transparency, disclosure, and AI limitations

Business relevance

- Establishes a practical risk posture for enterprise AI adoption

- Supports safer selection of LLMs and GenAI tools

- Connects technical evaluation with regulatory accountability

- Helps boards and delivery teams speak a common governance language